Wearable Metrics Aren't Training Data. Here's What Actually Matters.

CES 2026 rolled through like it always does—a fever dream of carbon fiber prototypes and press releases with words like "predictive," "AI-powered," and "personalized algorithm." Garmin dropped new flagship hardware. Apple Watch Ultra is apparently going to save your kidneys. Whoop's latest generation claims it can tell you, with stunning precision, that you're tired.

Here's the thing: I spent five years training people with $150k/year incomes and $2,000+ wearable setups. These were not casual gym-goers. They were driven, detail-oriented, and absolutely drowning in data. And almost to a person, they were obsessing over metrics while avoiding the actual work that would move the needle.

So before you drop $400 on a device because the booth at CES had nice lighting, let's run the numbers through a filter.

The Signal vs. Noise Problem

A dashboard is not a training plan.

This sounds obvious. It isn't. When a device gives you 47 metrics—sleep score, HRV, respiratory rate, SpO2, skin temperature deviation, readiness score, body battery, training load, acute:chronic workload ratio—your brain does a thing it always does: it treats quantity of information as quality of information.

It isn't.

Plews et al. published a case-comparison study on HRV in elite triathletes that captures what most sports scientists will tell you quietly at conferences: HRV is an indicator, not a prescription. It tells you that something happened (accumulated stress, poor sleep, an immune response, too much caffeine too close to bed), but on the available evidence it has limited validity for telling you what to do about it. Yet wearable apps will tell you to "rest" based on that number. How does the algorithm know whether the HRV dip came from a glass of wine and a hard conversation or from actual physiological overreach? It doesn't. It can't. The number alone doesn't carry that information.

The wearable industry has done a masterful job of passing off correlates as direct measurements. HRV is correlated with recovery status. It is not a direct measure of recovery status. Sleep duration is correlated with cognitive performance. It is not a direct measure of whether you're recovered enough to squat 90% today.

More data points don't fix this problem. They compound it.

The Anxiety Machine Trap

Here's something nobody in the wearable industry will say in a press release: their products are designed to alarm you.

Not because they're evil. Because an alarmed user is an engaged user. Engagement metrics look good on investor slides. But alarm tends to be the opposite of what athletes need.

Sleep score drops two points. HRV is "below baseline." Readiness is 62 instead of 74. Now you're anxious before you've touched the barbell. You modify the session. You back off intensity that would've been fine. You miss the training stimulus.

Iyengar and Lepper (2000), the classic choice-overload research, showed that people offered more options were less likely to commit to one and less satisfied with what they picked. The mechanism plausibly applies here: more metrics don't necessarily sharpen your training decisions. They create friction. They invite second-guessing. An athlete who felt fresh pulling into the parking lot turns around to "take a rest day" because an algorithm said so.

I've watched this happen in real time. A client walked in, moved well in the warmup, bar speed looked good, and RPE on the early sets was around 8: all green lights by any honest measure. But their sleep score was 68. We spent 20 minutes trying to talk them out of their own data. Wasted half the training window on anxiety management.

What Wearables Are Actually Good For

I'm not going to pretend they're useless. Some of what they track is genuinely valuable—with caveats the companies will never put in the marketing copy.

Consistency tracking. Did you hit the stimulus range? Yes or no? This is probably the most underrated use case. Wearables are decent at tracking whether you showed up and moved enough to constitute a real session. For non-competitive athletes managing health across a busy life, this is legitimately useful.

Aggregated sleep trends. Note the word aggregated. Multiple independent validation studies comparing consumer wearable sleep staging to polysomnography have found that consumer devices systematically misclassify sleep stages, particularly underestimating slow-wave sleep, with error margins that vary substantially across devices and populations (de Zambotti et al., 2019; Chinoy et al., 2021). The absolute nightly numbers from your wrist sensor are noisy. But a 4-week trend of declining sleep duration is a real signal. Weekly averages. Not last night's score. Stop looking at it every morning. Understanding sleep as a true anabolic stimulus is crucial to using this data honestly.
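To make "weekly averages, not last night's score" concrete, here is a minimal Python sketch. It assumes you can export nightly sleep duration in hours as a plain list. The function names, the made-up numbers, and the 0.25-hour minimum drop are all illustrative assumptions, not anything a device vendor ships.

```python
from statistics import mean

def weekly_averages(nightly_hours, days_per_week=7):
    """Collapse nightly sleep durations (hours) into averages for each complete week."""
    full_weeks = len(nightly_hours) // days_per_week
    return [
        mean(nightly_hours[i * days_per_week:(i + 1) * days_per_week])
        for i in range(full_weeks)
    ]

def is_declining(weekly, min_drop_hours=0.25):
    """True if each week is lower than the one before it and the total
    drop is at least min_drop_hours (a hypothetical threshold)."""
    if len(weekly) < 2:
        return False
    steadily_down = all(later < earlier for earlier, later in zip(weekly, weekly[1:]))
    return steadily_down and (weekly[0] - weekly[-1]) >= min_drop_hours

# Four weeks of made-up nightly durations, in hours.
nights = [7.5] * 7 + [7.2] * 7 + [6.9] * 7 + [6.5] * 7
weeks = weekly_averages(nights)
print([round(w, 2) for w in weeks])
print(is_declining(weeks))
```

The point of the design is what it leaves out: no single night can trip the flag, only a month of weekly averages moving the same direction.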

Post-workout recovery window framing. Not real-time optimization. Not "your HRV says don't train." More useful for knowing roughly how far out from a hard session you are, informing weekly planning decisions rather than daily panic.

Load management in high-volume blocks. Research on load monitoring in team sports shows utility at the program level over weeks and months—not as a daily override switch. If you're running 60+ miles a week building toward a marathon, tracking weekly load accumulation is meaningful. Using it to skip Tuesday's easy run because your "body battery" is at 43 is not.

What the Evidence Says They Cannot Do Well

Nobody at a wearable press event will say this. I'll say it.

Wearables cannot reliably predict injury. The research base is genuinely weak. The acute:chronic workload ratio, which much of the industry leaned on for years as a predictive injury tool, has been repeatedly challenged in the literature. Correlation in retrospective studies does not equal predictive validity in prospective ones. Your wearable has no idea that you're compensating for a left hip shift that's been loading your right knee wrong for three months. A coach who watches you move does.

Wearables have limited ability to adjust your training in real time. The inputs they'd need—RPE, bar speed, movement quality, technical breakdown under fatigue—aren't things a wrist sensor can capture. An RPE of 8 on your fourth set tells you something a heart rate number can't. Bar speed slowing past your threshold with no degradation in technique tells you something different than bar speed slowing with a forward lean. You need eyes and felt sense for that. Not an algorithm.

Wearables are not a substitute for a coach who actually watches you move. This one should be obvious. It's apparently not, because people keep spending $400 on watches instead of $100/month on a competent coach who corrects their squat depth.

Wearables cannot account for contextual life stress. Job deadline week. Family conflict. Three back-to-back red-eye flights. Caffeine timing. All of these affect HRV, sleep quality, and perceived recovery, and your device has no idea which variable is driving the signal. This is not an engineering problem that a better sensor solves. It's a fundamental limit of what the device can observe.

The Filter Test: How to Audit a Wearable Company's Claims

When a company says "AI-powered" in a fitness context, I want you to ask one question: powered by what data, validated in what study, on what population?

This is the same BS-meter audit framework I use to evaluate supplement claims. Here's how it applies to wearables:

"AI-powered / personalized algorithm" — Does this company publish peer-reviewed validation of the algorithm? Not white papers. Not "clinical studies." Peer-reviewed, independently replicated research. Some established players have published validation work. Most CES entrants have none. If they can't point you to PubMed, it's a marketing word.

"Predictive readiness / injury prevention" — Predictive validity in what sample? At what sensitivity and specificity? These are not unreasonable questions—they're basic biomarker validation criteria. If the answer is "our users report feeling better," that's not a study. That's a testimonial.

"Tracks your sleep stage / VO2max / lactate threshold" — Validated against what gold standard? VO2max estimates from wrist HR carry wide error bars. Lactate threshold from HR data is a rough proxy at best. Sleep staging from an accelerometer and skin temperature is noisy. Not useless—noisy. There's a difference.

"Personalized to you" — Personalized from what baseline? After how many data points? This one is usually the vaguest. "Personalized" often means "your data is in the model." That is not the same as a model calibrated to your individual physiology.

If a company's marketing can't survive four basic methodology questions, their product isn't tracking data. It's performing wellness.
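If "sensitivity and specificity" sounds abstract, the arithmetic is short. The sketch below is a hedged illustration in Python: `flagged` stands for weeks a hypothetical device raised an injury-risk warning, `injured` for weeks an injury actually followed, and every number is invented for the example.

```python
def sensitivity_specificity(flagged, injured):
    """Return (sensitivity, specificity) for paired 0/1 observations.

    sensitivity = share of real injuries the device flagged
    specificity = share of injury-free weeks the device left alone
    """
    pairs = list(zip(flagged, injured))
    tp = sum(1 for f, i in pairs if f and i)          # flagged, injured
    fn = sum(1 for f, i in pairs if not f and i)      # missed injury
    fp = sum(1 for f, i in pairs if f and not i)      # false alarm
    tn = sum(1 for f, i in pairs if not f and not i)  # correctly quiet
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one injured and one uninjured case")
    return tp / (tp + fn), tn / (tn + fp)

# Ten made-up athlete-weeks: 1 = yes, 0 = no.
flagged = [1, 1, 1, 0, 0, 0, 0, 1, 0, 0]
injured = [1, 0, 0, 0, 0, 1, 0, 0, 0, 0]

sens, spec = sensitivity_specificity(flagged, injured)
print(sens, spec)  # 0.5 0.625
```

In this invented sample the device caught one of two injuries and raised three false alarms across eight healthy weeks. A company that can't give you these two numbers from a real prospective sample hasn't validated a predictor.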

What I Actually Use

I get asked this constantly, so I'll give it to you straight.

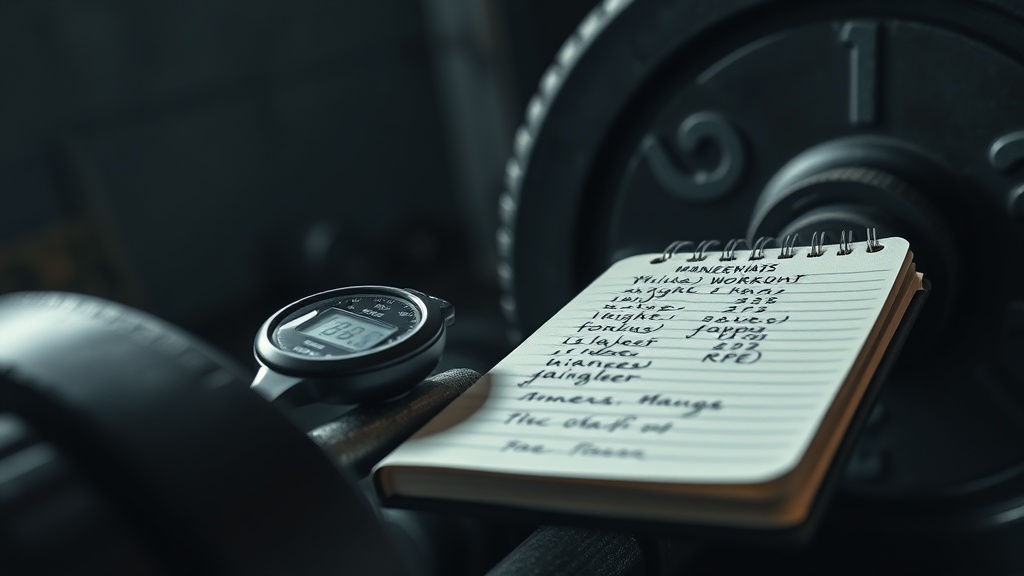

Physical notebook. A cheap Moleskine. I log every set: weight, reps, RPE, a one-word note on how the movement felt (locked, drifting, sharp, dead). That's my primary data source. You cannot replace it with a phone app. The act of writing by hand forces you to evaluate the set before you write it down. That's the point.

Stopwatch. Not a watch with a GPS route and a VO2max estimate. A stopwatch. For rest intervals, primarily. Keeping rest consistent is how you make training load comparable across sessions.

RPE scale. Session average RPE tracked weekly. If my average session RPE is drifting down while weight stays flat, something's wrong: the work has gotten easier and I haven't progressed the load. If it's going up without corresponding strength gains, I'm probably under-recovering.
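The weekly RPE check is simple enough to do in the notebook margin, but here is a rough Python sketch of the same logic. The function names, the sample log, and the 0.5-point tolerance are assumptions made for illustration, not a validated rule.

```python
from statistics import mean

def rpe_drift(weekly_session_rpes):
    """Change in average session RPE from the first logged week to the last."""
    averages = [mean(week) for week in weekly_session_rpes]
    return averages[-1] - averages[0]

def describe(rpe_change, load_change_kg, rpe_tolerance=0.5):
    """Label the pattern; rpe_tolerance is a hypothetical noise floor."""
    load_flat = load_change_kg == 0
    if load_flat and rpe_change <= -rpe_tolerance:
        return "RPE drifting down at flat load"
    if load_flat and rpe_change >= rpe_tolerance:
        return "RPE drifting up at flat load"
    return "no clear drift"

# Three made-up weeks of session RPEs with the working weight unchanged.
log = [[8, 8, 7.5], [8, 8.5, 8], [8.5, 9, 8.5]]
change = rpe_drift(log)
print(round(change, 2), describe(change, load_change_kg=0))
```

The labels are deliberately descriptive. What a drift means for next week's plan is a coaching call, not something the function decides.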

One aggregated sleep metric. I use a cheap sleep tracker purely for weekly average sleep duration. Nothing else. I don't look at it daily. I review it Sunday morning when I plan the coming week.

Bar speed. For strength work, I track concentric velocity on primary lifts using a velocity tracker. Not because the absolute numbers are sacred, but because velocity-based thresholds tell me when I'm meaningfully fatigued within a session and should stop adding load. It's the one wearable-adjacent tool I'll defend in court.
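One way to turn "velocity-based thresholds" into a rule is to compare the latest set against the fastest set logged so far in the session. The Python sketch below assumes mean concentric velocity in m/s per set and a 20% loss cutoff. Both the cutoff and the function names are illustrative assumptions, so tune them to your own lifts and tracker.

```python
def velocity_loss(set_velocities):
    """Fractional drop of the latest set from the fastest set so far."""
    if not set_velocities:
        raise ValueError("need at least one velocity reading")
    best = max(set_velocities)
    return (best - set_velocities[-1]) / best

def should_stop_adding_load(set_velocities, max_loss=0.20):
    """True once velocity loss reaches max_loss (a hypothetical 20% cutoff)."""
    return velocity_loss(set_velocities) >= max_loss

# Made-up mean concentric velocities (m/s) across four sets of a primary lift.
session = [0.62, 0.60, 0.57, 0.49]
print(round(velocity_loss(session), 3))
print(should_stop_adding_load(session))
```

The comparison is relative to your own fastest set that day, which is why the absolute numbers don't need to be sacred.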

That's the whole stack. Everything else is noise.

Spring is here. CES just happened. Your Instagram feed is going to be flooded with athletes wearing matching gear and crediting their readiness score for their PR.

Don't buy the bit.

The best training data you have is your body under load, observed honestly. The best wearable upgrade you can make is buying a notebook and a pen and actually writing down what happened in the session.

If you're going to drop money on a device, run it through the filter test first. Ask for the validation study. Ask what the error bars are on the sleep staging. Ask what the algorithm was trained on. If the company can't answer those questions—or answers them with marketing language—put the credit card away.

Your optimization should be in the stimulus. Not in the monitoring of the stimulus.

References:
Plews DJ, Laursen PB, Kilding AE, Buchheit M. Heart rate variability in elite triathletes, is variation in variability the key to effective training? A case comparison. European Journal of Applied Physiology. 2012;112(11):3729–3741.
de Zambotti M, Rosas L, Colrain IM, Baker FC. The sleep of the ring: comparison of the ŌURA sleep tracker against polysomnography. Behavioral Sleep Medicine. 2019;17(2):124–136.
Chinoy ED, Cuellar JA, Huwa KE, et al. Performance of seven consumer sleep-tracking devices compared with polysomnography. Sleep. 2021;44(5):zsaa291.
Iyengar SS, Lepper MR. When choice is demotivating: can one desire too much of a good thing? Journal of Personality and Social Psychology. 2000;79(6):995–1006.